Autonomous agents are not just “faster users”; they behave fundamentally differently from humans, and that difference reshapes the demands placed on your database. While traditional databases were optimized for human-speed interactions, agents can overwhelm them like a swarm. For example:

Agents are chatty. They break work into many small reasoning steps. Each step might read context, write intermediate state, store memory, enqueue tasks, or log outcomes. Instead of a single transaction every few seconds (human pace), you now have dozens or hundreds per second per agent.

They run concurrently. Agents don’t politely take turns like users clicking through a UI. Instead, they constantly spawn subtasks, coordinate across tools, and operate in parallel. A single workflow can generate bursts of simultaneous reads and writes.

They retry automatically. If an API call fails, if a lock times out, if a transaction aborts, they don’t give up. They retry. That retry behavior amplifies load patterns and turns small bottlenecks into cascading pressure.
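
To get a feel for how retries amplify load, here is a back-of-the-envelope sketch. It rests on an assumption made purely for illustration, not on anything measured in this article: each attempt fails independently with probability `p_fail`, and the client retries at most `max_retries` times. Under that model, the expected number of attempts per logical operation is a short geometric sum.

```python
def expected_attempts(p_fail: float, max_retries: int) -> float:
    """Expected attempts per logical operation.

    Assumes (hypothetically) that each attempt fails independently with
    probability p_fail and that the client retries at most max_retries times.
    """
    if not 0.0 <= p_fail <= 1.0:
        raise ValueError("p_fail must be between 0 and 1")
    # Attempt k+1 only happens if the first k attempts all failed: p_fail ** k.
    return sum(p_fail ** k for k in range(max_retries + 1))


if __name__ == "__main__":
    for p in (0.05, 0.25, 0.50):
        attempts = expected_attempts(p, max_retries=5)
        print(f"p_fail={p:.2f} -> {attempts:.2f} attempts per operation")
```

In this simplified model a 50% failure rate nearly doubles the work sent to the database. In practice failures tend to become more likely as load rises, so the independence assumption is probably optimistic.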

They scale elastically. When traffic increases, it is not just a few additional users. You may need to support hundreds or thousands of agents. As a result, the workload can grow much faster than expected.

Why traditional database scaling breaks for AI agents

For decades, databases were architected around human-paced applications. The dominant assumptions were simple:

users pause between actions

traffic grows gradually

contention is manageable

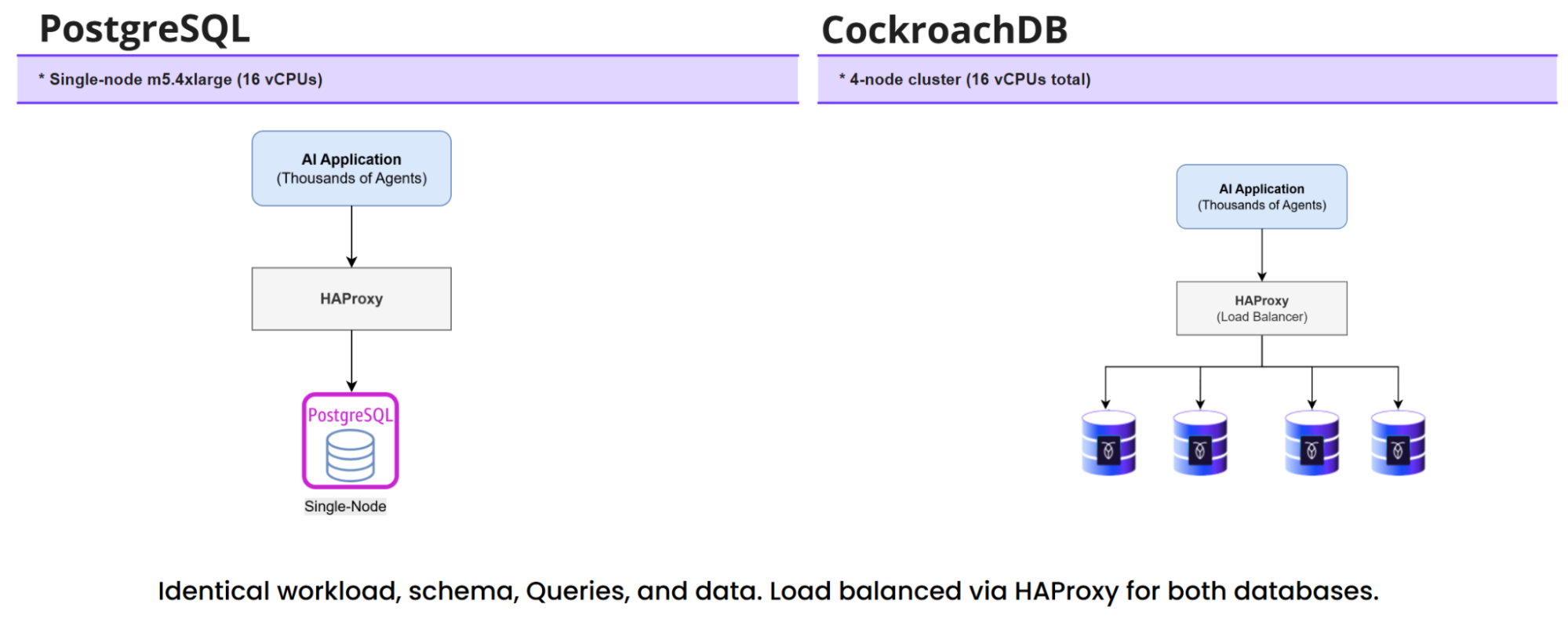

Traditional PostgreSQL deployments reflect that world: a single primary node coordinating writes, replicas to offload reads, connection pools to smooth bursts, and operational playbooks built around predictable load patterns. That model works well when requests arrive at human speed.

However, will it hold when the “users” are autonomous agents generating hundreds of concurrent transactions, retrying aggressively, and scaling out in bursts of thousands? Can a single-node write architecture effectively absorb that kind of sustained, swarm-like pressure without becoming the bottleneck itself?

Simulating AI agent workloads at scale

As a Sr. Partner Solutions Architect at Cockroach Labs, I've seen firsthand how agentic workloads break traditional database assumptions. To move this discussion from theory to reality, we evaluated how both PostgreSQL and CockroachDB behave when thousands of autonomous agents interact with them concurrently.

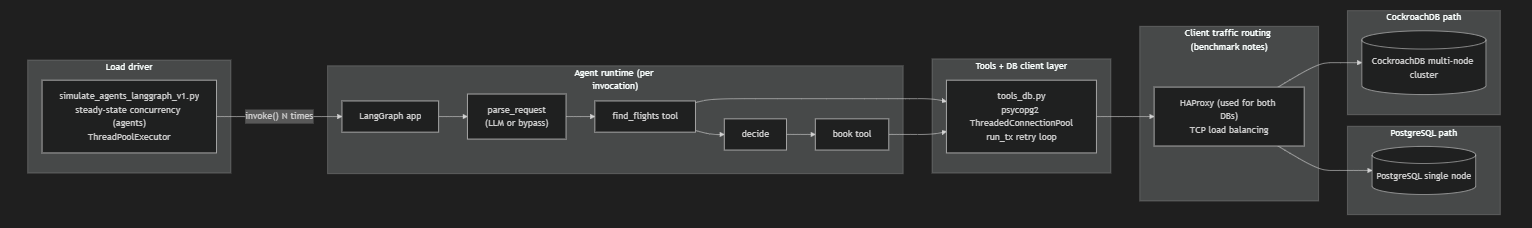

Rather than using a synthetic benchmark, I built a demo application that emulates a travel booking chat agent. The agent accepts a natural language prompt such as:

“Book me a flight from LDN to JFK for 1 seat.”

Under the hood, the workflow is transactional and stateful:

The agent parses intent → it queries available flights → it selects a candidate flight → it attempts to book a seat → it commits the transaction (or retries if it fails).

This closely reflects the behavior of real agentic systems, whose concurrent workloads combine:

multiple reads

conditional writes

transactional guarantees

automatic retries
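
The read, conditional write, commit, and retry steps above can be sketched roughly as follows. This is a minimal illustration, not the demo application's code: it uses Python's built-in `sqlite3` as a stand-in so it runs anywhere, and the `flights` table, its columns, `make_demo_db`, and `book_seat` are all hypothetical names.

```python
import sqlite3
import time
from typing import Optional


def make_demo_db() -> sqlite3.Connection:
    """Create an in-memory database with one hypothetical flight."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE flights (id INTEGER PRIMARY KEY, origin TEXT, "
        "dest TEXT, seats_available INTEGER)"
    )
    conn.execute("INSERT INTO flights VALUES (1, 'LDN', 'JFK', 2)")
    conn.commit()
    return conn


def book_seat(conn: sqlite3.Connection, origin: str, dest: str,
              seats: int, max_retries: int = 3) -> Optional[int]:
    """Return the booked flight id, or None if nothing could be booked."""
    for attempt in range(max_retries + 1):
        try:
            with conn:  # commits on success, rolls back on an exception
                row = conn.execute(
                    "SELECT id FROM flights WHERE origin = ? AND dest = ? "
                    "AND seats_available >= ? ORDER BY id LIMIT 1",
                    (origin, dest, seats),
                ).fetchone()
                if row is None:
                    return None  # no candidate flight has enough seats
                flight_id = row[0]
                # Conditional write: only applies if the seats are still free.
                cur = conn.execute(
                    "UPDATE flights SET seats_available = seats_available - ? "
                    "WHERE id = ? AND seats_available >= ?",
                    (seats, flight_id, seats),
                )
                if cur.rowcount == 1:
                    return flight_id
                # Otherwise another agent took the seats first; retry below.
        except sqlite3.OperationalError:
            pass  # e.g. "database is locked"; treated as retryable here
        time.sleep(0.01 * (attempt + 1))  # brief linear backoff
    return None


if __name__ == "__main__":
    db = make_demo_db()
    print(book_seat(db, "LDN", "JFK", 1))
```

Against PostgreSQL or CockroachDB the same shape would typically catch serialization failures (SQLSTATE 40001) instead of `sqlite3.OperationalError`, and the choice of backoff would matter far more under thousands of concurrent agents.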

Load testing with a multi-agent CLI harness

For consistency and performance isolation, we removed live LLM parsing from the scaling tests to avoid token overhead and external variability.
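
The article does not describe what replaced the LLM parsing step, so the following is only one plausible approach: a deterministic regex parser that turns a prompt of the form shown earlier into structured intent. `BookingIntent` and `parse_prompt` are hypothetical names, and the assumption that airport codes are three letters is ours.

```python
import re
from typing import NamedTuple, Optional


class BookingIntent(NamedTuple):
    origin: str
    dest: str
    seats: int


# Matches e.g. "flight from LDN to JFK for 1 seat"; the seat count is optional.
_PROMPT_RE = re.compile(
    r"flight\s+from\s+(?P<origin>[A-Za-z]{3})\s+to\s+(?P<dest>[A-Za-z]{3})"
    r"(?:\s+for\s+(?P<seats>\d+)\s+seats?)?",
    re.IGNORECASE,
)


def parse_prompt(prompt: str) -> Optional[BookingIntent]:
    """Extract origin, destination, and seat count without calling an LLM."""
    match = _PROMPT_RE.search(prompt)
    if match is None:
        return None
    # Default to one seat when the prompt does not specify a count.
    seats = int(match.group("seats")) if match.group("seats") else 1
    return BookingIntent(
        match.group("origin").upper(), match.group("dest").upper(), seats
    )


if __name__ == "__main__":
    print(parse_prompt("Book me a flight from LDN to JFK for 1 seat."))
```

A parser like this keeps the prompt-to-intent step constant-time and local, so any variation in the measurements comes from the database rather than from token generation.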

The CLI harness internally runs the compiled LangGraph workflow. The architecture mirrors a modern agentic stack using:

LangGraph for deterministic state orchestration

LangChain-style tool execution for structured actions

MCP-style modular tool interfaces to interact with the database

For a deeper dive, explore our article on Agent Development with CockroachDB and LangChain, and read through the official LangChain documentation regarding integration with CockroachDB.

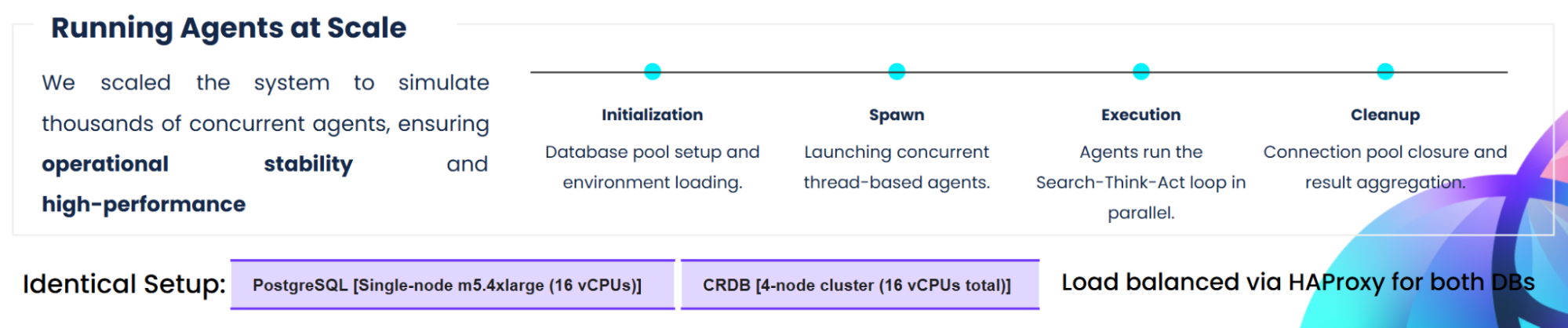

The goal of the experiment was not to determine which database is faster at low user counts. At a small scale, most systems perform similarly. The objective was to understand what happens when thousands of parallel agents continuously execute transactional workflows without pause.

Autonomous systems do not behave like human users. They do not wait between clicks, or throttle themselves intuitively. They operate in parallel, retry immediately on failure, and sustain pressure as long as compute is available.
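
A closed-loop load generator of this kind can be sketched in a few lines. This is not the actual CLI harness: `run_agents` and `fake_booking` are hypothetical names, the "workflow" just sleeps instead of touching a database, and one thread per agent is used only to keep the example short.

```python
import statistics
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List


def run_agents(workflow: Callable[[int], None], num_agents: int,
               ops_per_agent: int) -> Dict[str, float]:
    """Run num_agents closed-loop agents; report throughput and mean latency."""

    def agent(agent_id: int) -> List[float]:
        latencies = []
        for _ in range(ops_per_agent):
            start = time.perf_counter()
            workflow(agent_id)  # no think time between operations
            latencies.append(time.perf_counter() - start)
        return latencies

    started = time.perf_counter()
    with ThreadPoolExecutor(max_workers=num_agents) as pool:
        per_agent = list(pool.map(agent, range(num_agents)))
    elapsed = time.perf_counter() - started

    all_latencies = [lat for lats in per_agent for lat in lats]
    return {
        "ops": float(len(all_latencies)),
        "ops_per_sec": len(all_latencies) / elapsed,
        "mean_latency_s": statistics.fmean(all_latencies),
    }


def fake_booking(agent_id: int) -> None:
    """Stand-in for one booking workflow; sleeps instead of calling a database."""
    time.sleep(0.005)


if __name__ == "__main__":
    print(run_agents(fake_booking, num_agents=20, ops_per_agent=5))
```

To reach thousands of agents, a real harness would more likely use `asyncio` or several processes, and would swap `fake_booking` for the real transactional workflow.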

Test environment and configuration

Client configuration:

Tests were run from a Windows instance (jump server) with the following details.

Hardware Details:

Memory Configuration:

Additional Settings:

PostgreSQL

Connections: max_connections is set to 5000.

Parallel Execution: Configured with 8 max_worker_processes and 8 max_parallel_workers.

Durability: wal_level is set to replica with synchronous_commit on.

Performance results: PostgreSQL vs CockroachDB vs CockroachDB Cloud

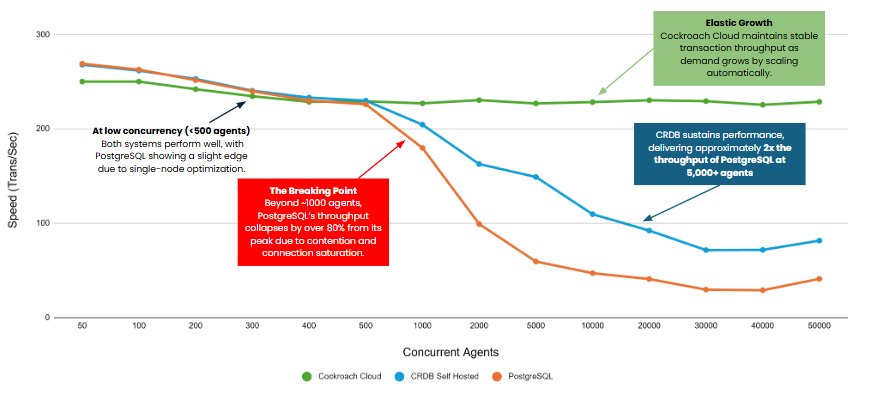

CockroachDB delivers stable, high throughput for large-scale AI agent loads, while PostgreSQL's performance sharply declines with increased concurrency.

As the graph above shows, both systems track very closely up to about 500 agents, with just a few percentage points’ difference. PostgreSQL has a slight edge at the very low end, which is expected for a single-node system under modest load. The first clear inflection point appears between 700 and 1,000 agents, where PostgreSQL starts shedding throughput more aggressively while CockroachDB flattens and holds relatively steady.

By 5,000 agents, the gap becomes significant: CockroachDB is around 130 ops/sec versus PostgreSQL at roughly 57 ops/sec, about a 2.3× advantage. At 10,000 agents, CockroachDB remains near 129 ops/sec while PostgreSQL drops to about 42 ops/sec, widening the gap to roughly 3×.

This is where PostgreSQL is clearly in saturation territory. Even at 50,000 agents, CockroachDB sustains about 128 ops/sec compared to PostgreSQL’s ~67 ops/sec, still close to a 2× advantage.
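
The ratios quoted above follow directly from the throughput figures in the text. The short check below simply redoes that arithmetic. The input numbers are the approximate values stated in this section, and `REPORTED` and `throughput_ratio` are names introduced here for illustration.

```python
# Approximate throughput (ops/sec) quoted in the text:
# agents -> (CockroachDB, PostgreSQL)
REPORTED = {
    5_000: (130, 57),
    10_000: (129, 42),
    50_000: (128, 67),
}


def throughput_ratio(crdb_ops: float, pg_ops: float) -> float:
    """How many times more operations per second CockroachDB completed."""
    return crdb_ops / pg_ops


if __name__ == "__main__":
    for agents, (crdb, pg) in REPORTED.items():
        ratio = throughput_ratio(crdb, pg)
        print(f"{agents:>6} agents: {ratio:.2f}x")
```

Because the inputs are rounded ("around", "roughly"), the computed ratios should be read with the same tolerance.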

When we run the same workflow on CockroachDB Cloud, which offers an elastic deployment model that scales based on demand, throughput holds at ~230 ops/sec. As the number of concurrent agents increases, the system automatically scales compute (vCPUs) to sustain that throughput. This lets the database maintain a steady performance profile without the degradation seen in single-node systems, even under highly bursty, agent-driven workloads.

CockroachDB prevents latency spikes at high concurrency

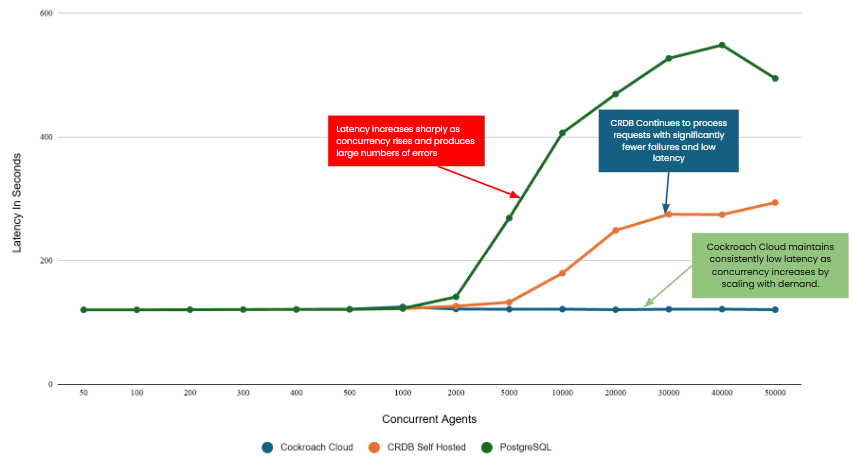

At 5,000 agents, PostgreSQL's latency is 2× to 2.3× higher than CockroachDB's. By 10,000 agents, it approaches 3× higher, showing clear saturation.

This chart tells the other half of the story. While throughput shows how much work gets done, latency shows what each individual agent experiences. It’s a coarse but very practical way to visualize what saturation feels like: Adding more agents eventually stops increasing useful work, and instead just increases waiting time.

Up to about 1,000 agents, both systems track very closely on latency, with differences staying within a relatively narrow range. The first clear inflection point appears between roughly 2,000 and 4,000 agents, where PostgreSQL’s latency begins rising more aggressively while CockroachDB’s curve increases more gradually and remains comparatively controlled.

Another way to interpret this: As concurrency rises, “adding more agents” gradually becomes “adding more idle time.” Instead of completing more bookings per second, agents spend increasing amounts of time blocked on database work.
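
One way to make this relationship concrete is Little's Law, which relates concurrency N, throughput X, and time per operation R as N = X × R. The sketch below assumes a closed loop in which every agent keeps exactly one operation in flight with no think time. The article does not state this about the harness, and the throughput plateau used in the demo is a hypothetical round number, not a measured result.

```python
def implied_time_per_op(num_agents: int, ops_per_sec: float) -> float:
    """Little's Law for a closed loop: N = X * R, so R = N / X (in seconds)."""
    if ops_per_sec <= 0:
        raise ValueError("ops_per_sec must be positive")
    return num_agents / ops_per_sec


if __name__ == "__main__":
    saturated_ops_per_sec = 100.0  # hypothetical plateau, not a test result
    for agents in (1_000, 2_000, 4_000):
        seconds = implied_time_per_op(agents, saturated_ops_per_sec)
        print(f"{agents} agents at {saturated_ops_per_sec:.0f} ops/sec "
              f"-> {seconds:.0f} s per operation")
```

Once throughput has plateaued, doubling the number of agents under these assumptions doubles the time each agent spends waiting, which is exactly the "more idle time" effect described above.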

When we test the same workflow on CockroachDB Cloud, latency remains consistently flat even as concurrency increases. As load grows, the system scales compute to absorb demand, keeping response times predictable and avoiding the sharp spikes seen in single-node systems under contention.

Under heavy AI agent load, PostgreSQL accumulates waiting time much faster. While CockroachDB is not immune to saturation, its latency grows more gradually and it maintains a significantly lower mean wait time at scale.

Choosing a database for AI agent workloads

PostgreSQL remains an excellent and reliable database for its designed purpose: powering applications within the architectural paradigm of a single server. However, its single-node architecture runs counter to the core requirements of modern AI systems: concurrency, resilience, and geographic distribution. Meeting agentic AI’s many needs with PostgreSQL can require complex workarounds.

A distributed SQL database like CockroachDB provides a strong architectural foundation for today’s agentic AI infrastructure needs. Its strengths as a database for AI agents include:

elastic horizontal scaling

built-in geo-distribution

What once may have seemed like niche features are now necessities for mission-critical AI systems. By building these capabilities directly into the database, CockroachDB avoids complex patchwork architectures to deliver a simpler, more resilient data layer.

For workloads that are inherently unpredictable, where agent concurrency can spike and recede rapidly, CockroachDB Cloud extends these benefits with an elastic, serverless deployment model that automatically scales compute based on demand. This allows teams to maintain consistent, predictable performance without manual capacity planning, even as workloads fluctuate in real time.

Choosing the right database for agentic AI is a strategic decision. It determines whether an organization can move AI initiatives from fragile proof of concept stages to resilient, production-grade platforms that can scale with both demand and ambition.

Tushar Ghotikar is Sr. Partner Solutions Architect at Cockroach Labs.